Data scientists know that taking on an important big data project for a business requires a structured process, or life cycle, made up of five major stages. Here we’re concerned with the second, Data Acquisition and Understanding. This stage has three primary steps that serve two goals: 1) producing high-quality data that clearly relates to the target variables, and 2) developing a data pipeline that refreshes the data regularly and supports ongoing machine learning.

Ingest the Data

The first step in our Data Acquisition and Understanding process is to establish a procedure for moving the data from where it is (the source location) to where you want it to be (the target location). Before analysis, training, or any sort of predictive activity can take place, work out how you’ll select and move the data sets you need.
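As a rough illustration of moving data from a source location to a target location, here is a minimal sketch that copies rows from a CSV source into a SQLite table. The function name `ingest_csv`, the table name `raw_data`, and the sample columns are all hypothetical, and an in-memory string and database stand in for a real file and a real data store.

```python
import csv
import io
import sqlite3


def ingest_csv(source, conn, table="raw_data"):
    """Copy every row of a CSV source into a SQLite table; return the row count."""
    reader = csv.reader(source)
    header = next(reader)  # the first line holds the column names
    columns = ", ".join(f'"{name}"' for name in header)
    placeholders = ", ".join("?" for _ in header)
    conn.execute(f'CREATE TABLE IF NOT EXISTS "{table}" ({columns})')
    rows = list(reader)
    conn.executemany(f'INSERT INTO "{table}" VALUES ({placeholders})', rows)
    conn.commit()
    return len(rows)


# Stand-ins for a real source file and a real target database (assumed data).
source = io.StringIO("customer_id,age,churned\n1,34,0\n2,51,1\n3,,0\n")
target = sqlite3.connect(":memory:")
loaded = ingest_csv(source, target)
```

Loading the rows as-is, without cleaning them on the way in, keeps ingestion separate from the exploration work that follows.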

Explore the Data

Step two is to develop a solid understanding of the data. Data collected in the real world is far from perfect. It may be incomplete, noisy, or suffer from any number of other problems. This is the auditing process. You may need to work through it in several iterations before you clearly understand what you’re working with and are ready to introduce it to the modeling process.
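One simple way to start such an audit is to count what is missing or repeated. The sketch below is only an assumption about what an audit might check: the `audit` function, the column names, and the sample records are hypothetical, and a real audit would likely cover many more problems.

```python
from collections import Counter


def audit(records, columns):
    """Count missing values per column and fully duplicated rows."""
    missing = Counter()
    seen = set()
    duplicates = 0
    for record in records:
        for column in columns:
            value = record.get(column)
            # Treat None and blank strings as missing.
            if value is None or str(value).strip() == "":
                missing[column] += 1
        key = tuple(record.get(column) for column in columns)
        if key in seen:
            duplicates += 1
        seen.add(key)
    return {"rows": len(records), "missing": dict(missing), "duplicates": duplicates}


# Assumed sample data with one blank age, one missing label, and one repeated row.
records = [
    {"customer_id": "1", "age": "34", "churned": "0"},
    {"customer_id": "2", "age": "", "churned": "1"},
    {"customer_id": "2", "age": "", "churned": "1"},
    {"customer_id": "3", "age": "51", "churned": None},
]
report = audit(records, ["customer_id", "age", "churned"])
```

Running the same audit again after each round of fixes fits the iterative nature of this step.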

The reality is the data will likely need to be cleaned before it’s of much use. The phrase “Garbage In, Garbage Out” definitely applies here. The cleaning process is an article (or more) unto itself. Once you’re working with clean data, it’s time to step back and look for existing patterns. The goal here is to note any naturally existing connections between the data set and the target model you want to apply it to. This is also the point to ask whether there is enough data to accomplish the goal and move forward.

The iterative nature of this step should be evident as well. You might have perfectly clean data that just doesn’t match well with the intended modeling. There’s a distinct possibility you’ll have to go back and look for new or better data sources that will either augment or replace the set you first identified.

Creating a Data Pipeline

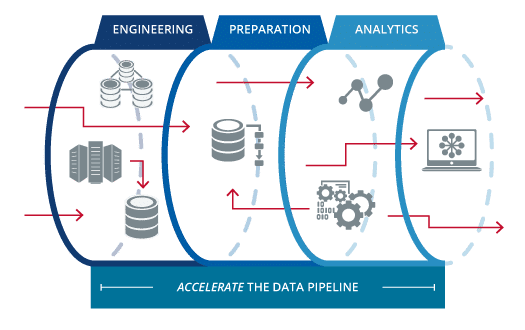

After your data is ingested and cleaned, the next step is to create the process by which new incoming data is integrated into the working model through regular scoring and refreshing. In this sense, the data pipeline is simply an organized workflow that all team members are familiar with. It should be an automated process that takes new data from various sources and prepares it for use in the ongoing learning process. The pipeline can take various designs. The three most common are:

- batch-based

- streaming or real-time

- a hybrid of the two

The constraints of your present system and the specific needs of your business play a large role in determining the ultimate architecture of the pipeline.
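To make the difference between the three designs concrete, here is a minimal sketch in which the same preparation step is applied in batch, streaming, and hybrid fashion. Every name is hypothetical, and `prepare` (which only trims whitespace) stands in for whatever preparation your data actually needs.

```python
def prepare(record):
    """Hypothetical preparation step: trim whitespace from every value."""
    return {key: value.strip() for key, value in record.items()}


def run_batch(records):
    """Batch design: prepare a whole accumulated set in one pass."""
    return [prepare(record) for record in records]


def run_streaming(source):
    """Streaming design: prepare each record as soon as it arrives."""
    for record in source:
        yield prepare(record)


def run_hybrid(history, live):
    """Hybrid design: batch over the history, then keep up with live records."""
    yield from run_batch(history)
    yield from run_streaming(live)


# Assumed sample data: a stored history plus an iterator acting as a live feed.
history = [{"name": " Ann "}, {"name": "Bob"}]
live = iter([{"name": " Cy"}])
prepared = list(run_hybrid(history, live))
```

Sharing one `prepare` function across all three designs means a later change of architecture does not change how individual records are treated.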

Deliverables

As we come to the end of this stage, there are three deliverables that should be complete before proceeding to the next major stage, Modeling. They are:

Data Quality Report: At a minimum, this report should include attribute and target relationships, variable ranking, and data summaries, but it can cover much more ground if you need it to.
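As one possible reading of those report contents, the sketch below builds per-variable summaries and ranks the variables by the strength of their relationship to the target. Using the absolute Pearson correlation for the ranking is an assumption made for illustration, not a method prescribed here, and the function names and sample values are hypothetical.

```python
import math
import statistics


def pearson(xs, ys):
    """Pearson correlation of two equal-length numeric lists (0.0 if either is constant)."""
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def quality_report(features, target):
    """Summarize each variable and rank variables by |correlation| with the target."""
    summaries = {
        name: {"min": min(values), "max": max(values), "mean": statistics.fmean(values)}
        for name, values in features.items()
    }
    relationships = {name: pearson(values, target) for name, values in features.items()}
    ranking = sorted(features, key=lambda name: abs(relationships[name]), reverse=True)
    return {"summaries": summaries, "relationships": relationships, "ranking": ranking}


# Assumed sample data: three candidate variables and a numeric target.
features = {
    "age": [20, 30, 40, 50],
    "visits": [1, 3, 2, 5],
    "constant": [1, 1, 1, 1],
}
target = [1, 2, 3, 4]
report = quality_report(features, target)
```

Correlation only captures linear relationships, so a variable that ranks low here may still matter to a nonlinear model.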

Solution Architecture: This should contain a description of the data pipeline you’ll use to score or make predictions on new data once the model is complete. The pipeline for retraining your model on new data should be included as well.

The Big Decision: Some call this the checkpoint decision. The bottom line is that it serves as a place to stop and evaluate what you’ve done and what you expect to accomplish in the future. Ultimately, now is the time to cut the project short if the returns don’t justify the cost and labor involved. Your basic choices are to proceed, collect more data, or abandon the project.